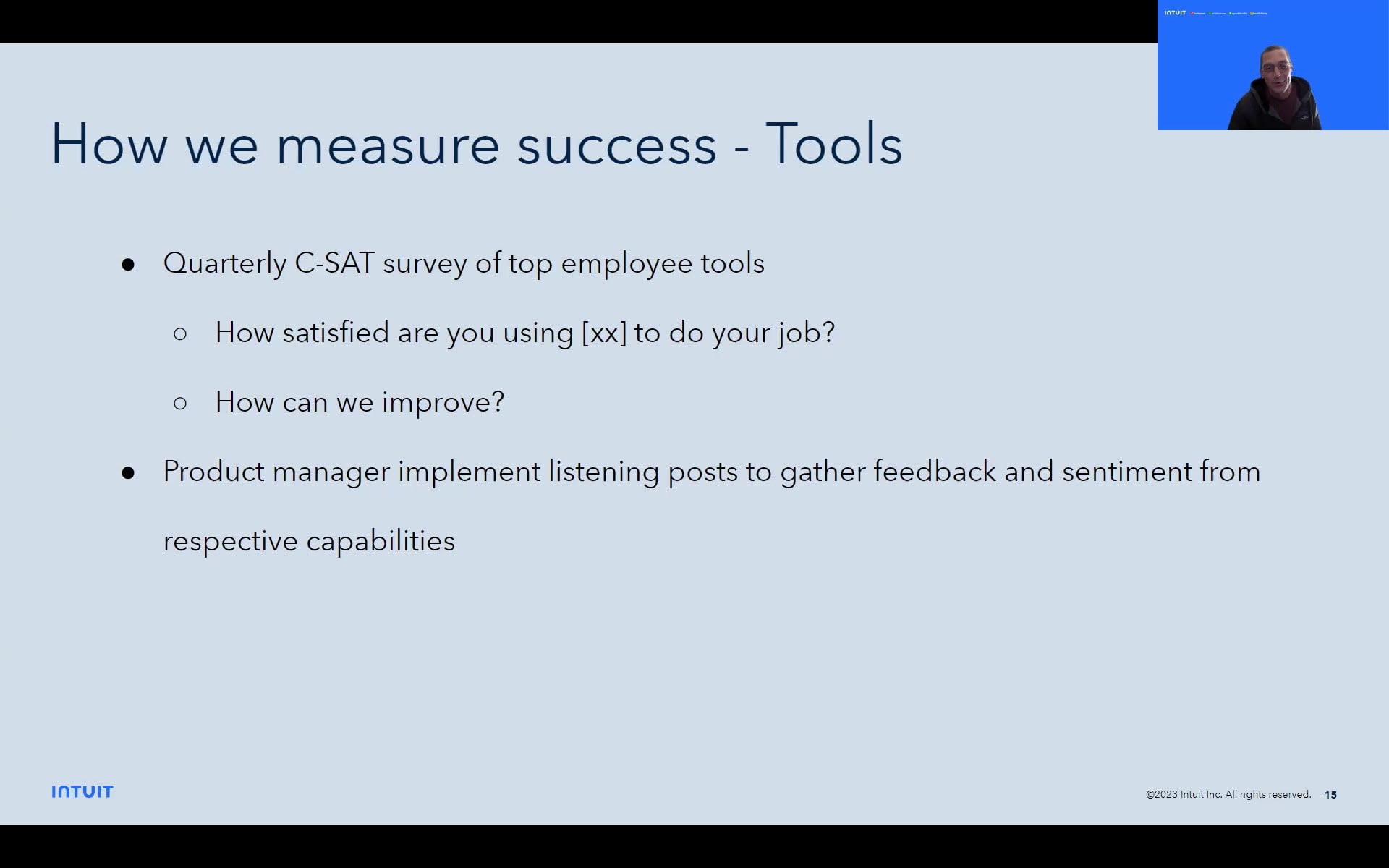

Welcome to all of you who have joined today. For those of you who don't know me, my name is Juanita Oguin. I am a product marketer, and I lead our platform solutions at Coveo. This is actually my second life at Coveo: I was here a year and a half ago, for a year and a half, building out our workplace line of business. I'm now back with a bigger and broader role, so I'm really excited to be joining you all today to talk about the digital workplace. And I am super thankful to Colin and Wendy for agreeing to share their intranet use case, which I think is especially important considering that we don't often get to see behind the scenes at companies, internally. So I really appreciate this knowledge and peer sharing today. I do have a few slides before I hand it over to Colin and Wendy. As people come in, hopefully you get comfortable with turning on your video. Let me move to the next slide. A few key points for today: this is going to be the first of what I hope are many peer-based roundtables, especially for our digital workplace group here, based on how valuable you find it today. This is my email. If you ever want to present at a future roundtable, or if you have topics that you want to ask your peers about, I'm always accessible to you, so please take note of that. Today's session is going to be recorded. We will be sharing it with the attendees only, for learning purposes, so we ask that you keep it internal as well. We would also love to have you join on video. This is meant to be more of a closed, peer-based group, and especially as we get to Q&A, we'd love to see all of you on screen. So please do turn on your videos. No pressure, though. And lastly, we ask that you update your name in Zoom to include your company name, so everyone knows what company you're representing. You can see I put Juanita from Coveo.
So those are just a few key points for today. I think I see a chat. Thanks, Ahmed. Ahmed is my colleague in customer marketing, so we'll be taking care of you today. Moving forward, just a quick agenda from my end. We'll do a quick who's in the room. We'll do a state of the world from an AI search perspective. I'll ask you a quick question in a poll, then I'll pass it over to Colin and Wendy, and we'll close out with some of our upcoming events that maybe you'll be at. There are both virtual and in-person ones. So to get started: you may not all be in the room today, but we had twenty-seven registrants from all of these companies. So it's a well-represented group of companies, with very notable logos, is the way I'll say it. But even more so, I think you should all be proud that you are workplace leaders really trying to make change in your organizations, which is not always the easiest thing to do, because workplace and employee experience is always a little harder to measure or to show the impact of. I think we all know how important it is, so I just want to say thank you for joining us. Thank you for being those progressive leaders for your companies, and I hope we get to learn from each other going forward. The other thing I wanted to share with you is the funny state of events we find ourselves in when it comes to AI search. I wanted to start with this point: we did an external, third-party research report to poll six hundred tech professionals on their views on search and AI. And there was one stat that you see here that was quite interesting, which says that eighty-four percent of tech professionals see search as critical to digital transformation, but they have a hard time engaging management about search. That was something we saw a couple of months ago. Fast forward to this year, when everything went a little crazy.
We see Microsoft's CEO calling AI-powered search the biggest thing for his company since cloud, fifteen years ago. So that's a very big leap from tech professionals saying it's hard to engage management. Now you have the CEOs, the C-level, saying it matters. That was driven by ChatGPT, of course. And fast forwarding again, I pulled some quick results for ChatGPT in the enterprise, saying it's the killer enterprise use case for knowledge, and many other things. Everyone's investing in this. But the core is that it all points back to AI search, which is the thing all of you, as Coveo customers, have been investing in. So it puts us all in an interesting predicament. We can chat more about that another time, and you'll hear more formal statements from Coveo in the coming weeks about our views on it and the fact that we actually do use large language models. We just didn't talk about them before. But now that ChatGPT is here, we have to talk about them. So you'll hear more from us in the coming weeks, but I wanted to share these few interesting insights from the last few weeks and months. Lastly, before I hand it over and open it up, we wanted to run a quick poll for everyone: what are your digital priorities over the next year? What are you really focused on? So, Ahmed, do you mind running the poll, and maybe we'll take a second to get people to respond or share more? What are your digital priorities over the next year? Is it intranet focused? I would have only put chatbot, but now that ChatGPT is here, I put them together. Is it more on the employee self-service and support side? Maybe it's more about modernizing infrastructure and moving to more headless or composable UI frameworks. Analytics, I know, is always important. And if we didn't put something here, do let us know if you have something else that you're really focused on over the next year.
We'll give you a second to answer, please. Juanita, it looks like panelists can't vote. Oh, you guys can't vote? No. Okay. I'm sorry. Well, that's fine. You can just choose from options one to five, and then we'll note that. Oh, yeah. How about you put it in the chat? Yeah. Thank you for calling that out, Rosanna. Alright. Can we end this poll, Ahmed? I'm going to get that off my screen. And then I'm going to stop sharing, because, hi, everyone. Good to see you. Okay. I'm looking at the chat. Oh, sorry. Do you guys need to see the items again? Sorry about that. That would be helpful. Okay. Do we see any trends here? Ahmed, Colin, Wendy, I'm looking to you now as I'm trying to multitask. Three. I see three and six. I see one, three, five. A little bit of everything. Access to knowledge to support our customers. Thanks, Brian, for that. Analytics from Lozana. Okay. Well, that's the lesson learned. We will not ask you a poll question while you're a panelist next time. Okay. So we have a little bit of a mix. It'd be great to just hear from you all going forward. That way we can cater programming to you as well. But with that, I think what I'm going to do is turn it over to Wendy and Colin to share more about their intranet use case, story, and success metrics. We'll be here in the background in case you have questions. Feel free to post your questions in the chat, and we'll open it up for dialogue after Colin and Wendy go through what they have to share. So with that, I'm going to pass it over to the two of you.
Thank you. Let me get the right screen up here. Not that one. How about that one? Thanks, Juanita. So I'm Colin Wright. I'm an intranet and search product manager here at Intuit. Joining me is Wendy Sui, who's our lead developer, both a friend and partner in crime in the work we do.
So thanks, Juanita, for giving us the opportunity to speak with the group here, and I appreciate y'all for your time as well. Juanita sent me some provocations leading up to this, so I'm going to talk through a few topics here for the next fifteen, twenty minutes and see how it goes. Hopefully we have some time for Q&A, but the way Juanita kicked it off is pretty relevant, so I hope you guys find this relevant and informative too. Can everybody hear me okay? Maybe a quick thumbs up, just to make sure I'm connecting. Okay. Thank you. So this first statement here, this run-on sentence, is truly a mouthful, but it's pretty descriptive, I think, of what we do and why. The thing is, the digital employee experience is all these things. It sort of depends. I ask myself, how hard is it to do my job? How cumbersome is it to do my job? You've got all these different employee types doing different work, seeking different outcomes, looking for different information. So it's hard to put a pin on what the employee digital experience is, because it's all the things. It's like death by a thousand cuts, so to speak. How all these things add up is the digital employee experience. I think as IT product managers, we need to balance the capability needs of our employees with the security and standardization needs of the company, because technology changes quickly. That'll become a theme here as I talk. We need to ensure that we can do both, and in order to do that, I think it takes a team. So I'm going to show this slide while talking through a little bit of how we resource this, how we strive to achieve this vision here in the middle. So we have a product management team. Selfishly, I'm a product manager.
So we often drive requirements. We obviously evaluate new tools. We collect feedback and performance metrics from employees. We run a lot of the day to day. We're sort of the hands on the wheel for a lot of these tools and capabilities. But we also have a solutions architect. I'll preface all of this by saying it's a small team. It's sometimes one or two people, but they have these responsibilities. So we have a solutions architect. They'll oftentimes seek out, as well as review and approve, new tools that we bring into the organization. They're also providing guidance on development patterns, new development patterns that are approved in the company. So they're bringing their technical expertise into the mix. We also have a user experience team that's dedicated to research and design. They do things like follow-me-homes, in-depth interviews, and user research studies with employees. And they provide brand guidelines for things like logos and mastheads for niche applications, but also component designs for any custom development that we do, and user experience flows. Excuse me. And then we also clearly have a development team. Wendy's on this team. The development team does a lot of custom development, but also just point-to-point integration. They're doing some operations for lots of the tools that I'll show on the next slide, as well as automation. Sometimes doing good automation and integration takes that work out of the employee's hands. Right? It makes their life easier. And then finally, we have a change leader, who we rely on for change management: new tool onboarding, ongoing training for tools, communication to employees about changes and updates, these kinds of things. So we do have a lot of people staffed against that employee digital experience.
So we're always thinking about each other's solutions and how these come together as one, so that you're driving consistency and familiarity within tools across the ecosystem, as well as being secure and sort of cutting edge. We want to embrace new tools as they come in, but also do it in a secure manner. So you get this capability hierarchy here. This is actually just an example. I wouldn't quote us on any of these, because these can all change at any given time. But you can see how we think about the application and its level of importance to employees, and therefore, again, how we stack resources against them. Bringing this back to search and intranet, we do think that this digital, clearly web, experience relies on some central tools that are your on and off ramps, and discovery tools, to find all these things: ways to get to training and to access tools. We know lots of people just use search as navigation, right, just to get to these tools. So as much as we want to think people are spending all their time in our intranet or search, they're not. Right? They're spending it in all these other web tools, and we just need to make sure that we're providing the right clarity and on ramps to get there. So I think how we integrate, and how efficient we make getting to and learning about these tools, makes for productive employees. This next bit is about how we treat knowledge management and use Coveo tools to drive maximum results. This as well takes a bit of work. It's not just spoon-feed the articles in and Coveo works. We do have quite a few things going on, on both ends.
So we've got a few knowledge bases that have slightly different templates, but all of them have applied some level of KCS principles. I'm not sure if David Kay would agree with all of it, but it's this adapted KCS. I'm sure all of you guys are familiar with this. Certainly, it gives us predictable articles that we can index and understand. We do use some metadata in the articles. This is different from the user criteria groups; it's metadata values that we can adjust and work with. On the Coveo side, we obviously have a connector. We bring in security identities, which includes the user criteria groups from ServiceNow. User criteria groups are like more refined permissions. I'll just put it that way. And it's been pretty effective for us. We also have a few extensions that we apply in Coveo. One is so that we can update the clickable URL. I'll show in the next couple of slides how that manifests in our intranet, but we update that. We also zoom in on a specific set of knowledge blocks as they're put together in ServiceNow. And then we also do some content cleansing for smart snippets, which we'll also show here in a demo. We have just one standard automatic relevance tuning model that runs across the query pipeline, and also a smart snippet model that runs across the query pipeline. We're choosy about what we put in the snippet model, because not all the content, obviously, is shaped in a way that makes sense. That's part of the reason why we're manipulating it a bit with the extension. Then we have very minor regional and worker type result ranking rules, but I always say, don't get carried away there. Don't boost and overboost and get carried away. So we just have some minor result ranking. So, seizure warning: my next three slides are GIFs, and I noticed these kind of run on a loop, so they may not be in the right state.
So I'm going to let these run for a bit while I talk through them. This is the search for annual enrollment. Here, obviously, I'm getting a snippet, but we also have this smart suggest. Here, I'm clicking through to the "I missed annual enrollment" article. Now it's actually loading in our intranet, but the words that exist on this page come from ServiceNow. So we're doing a direct call back to ServiceNow to render that article. Part of the reason we do that is so we can customize the page experience after the fact. So here's another one. It'll roll through. Sorry. Here's another one where I'm doing a search for Jira access, which will start over in a sec. Based on what we have indexed in our smart snippet model, it's pulling these. But I also wanted to show that we're doing a result template specifically for Stack Overflow. So we have a nested results scenario here as well. This allows employees to find answers really quickly. The next one is my favorite. I use this all the time. This link is always purple in my search results. So, Slack guest access. I deal with a lot of vendors, so we bring them into our Slack. Here, we actually index the ServiceNow forms. And this one's kind of interesting. I'll answer questions about it later if needed, but I'm able to basically replicate one form across a hundred different applications for this access request, using search and search extensions. Okay. Sorry about this. So, looking ahead. It's a good lead-in from Juanita. Content still matters. As much as we look at these new tools like generative AI, and obviously this is the future of search for us, we really need to understand it. Understand it for what it is. We've seen some scary things going on. So you still need facts. You still need well-formed content. I can't say it enough: you need good governance models and good, well-formed content.
And even with KCS practices put in place on our ServiceNow knowledge, we're still having to massage that data a bit on the search back end so that it works well with these kinds of AI tools. So just have respect for what it is. Which actually leads me into this next bullet, which I'm just sure we're going to see in the enterprise. We're going to see HR and legal questions come up for this. Right? Like, if we don't know what it's saying, then that's a problem. So I'd be really interested to see how this progresses with Coveo and how we work through this, because it's sort of easy to put this out there in the wild and then say it's no big deal. In the enterprise, this matters. So, yeah, I'm really interested to see how this moves forward. With that said, we're also testing with this. Wendy has built an OpenAI chatbot. So we use the. So we're all over this, but I just know there's going to be some backlash. With that said, though, discrete answers obviously are our future. This is going to be the expectation that everybody has. Just like all of you search practitioners have heard, "Why doesn't my search just work like Google?" Well, I think it's going to be, finally, "Just give me the answer." So I know that's going to be our future, and we're going to have to move diligently towards that vision. Okay. So this one is really more about the headless implementation we recently did. The demo slides that you saw a moment ago, that's all using headless. That's all our new search implementation. This really boiled down to the fact that we couldn't use the JavaScript framework. JS UI wasn't an option for our development platform. One of the questions in your poll, Juanita, was about people working on a new intranet. We are working on a new intranet. So it's a priority for us.
But this particular tool is a React-based presentation layer. It does have a kind of back-end CMS. We can tap into workforce data. It has its own personalization engine, which we'll use in conjunction with Coveo. This is more like: your home page greets you in the morning, and you have widgets that are personalized to you. But it really still comes down to these jumping-off points. People aren't going to be hanging out on their inside home page. They're going there as a utility to get to where they need to go to do their job. And then this headless approach also lets us standardize. We're implementing Coveo and a couple of other key tools, and this gives us a standardized approach, all implemented in the same global web. Okay. I'm almost done here. Sorry. I keep droning on. So, how we measure. This is tricky. But I'll say first, for all the tools I mentioned earlier, what we do is a quarterly customer satisfaction survey, and we survey twenty-five percent of the organization, in a fashion that's representative of the group. So I actually understand the job functions in the company, and then I survey the right percentage of people accordingly. But I ask two questions. First: how satisfied are you using the tool, whatever it is, to do your job? And I think that's very specific. Right? In the enterprise, employees often don't have a choice. We used to do NPS. Let me say that. We used to do NPS. And you can't really ask them whether they would recommend this to a friend. So it's about being very deliberate with this question, which is, can you do your job with this tool? And then it's sort of yes or no. Or rather, excuse me, it's a five-point scale. And then: how can we improve? So we get this.
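As an aside on the survey just described, a representative quarterly sample can be sketched as a stratified draw: group employees by job function, then pick the same fraction from each group. This is only a minimal illustration under stated assumptions. The `job_function` field, the `stratified_sample` helper, and the toy workforce are hypothetical and are not taken from the speaker's actual tooling.

```python
import random
from collections import defaultdict


def stratified_sample(employees, fraction=0.25, seed=0):
    """Pick roughly `fraction` of the employees from each job function."""
    rng = random.Random(seed)  # fixed seed so the draw is repeatable

    # Group employees by job function (hypothetical field name).
    by_function = defaultdict(list)
    for employee in employees:
        by_function[employee["job_function"]].append(employee)

    sample = []
    for _function, members in sorted(by_function.items()):
        # Keep at least one person per function so small groups are heard.
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical workforce: 40 engineers, 20 support agents, 8 HR staff.
employees = (
    [{"id": f"eng-{i}", "job_function": "engineering"} for i in range(40)]
    + [{"id": f"sup-{i}", "job_function": "support"} for i in range(20)]
    + [{"id": f"hr-{i}", "job_function": "hr"} for i in range(8)]
)

surveyed = stratified_sample(employees)
print(len(surveyed))  # 10 + 5 + 2 people
```

Sampling per function, rather than from the whole company at once, keeps small job functions from being drowned out by large ones.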
Product managers review this quarterly, but we also have other listening posts: Slack channels and in-product feedback, depending on what tool it is. We can gather feedback in the moment, which is oftentimes better, and we capture that. So going back to the earlier slides, we capture that and evaluate and review how we can improve any of these tools, or the onboarding or training, or whether we should just get a new tool. You know? Like, is it working? That's how we manage this. Now, search. Let me get specific to search. I want to be quick, because I do want to leave time here. I don't want to discount things like search volume and average click rank. These are great. We still look at them. I look at them every day. However, some of the standard metrics sometimes don't represent things like non-clicks. Like, I didn't click. Maybe you saw a snippet, you got your answer right there, and you didn't click because you didn't need to. Then there's this idea of clarifying searches: I might modify my search. And so we developed a metric that looks at the search session a bit and tries to determine this in a better way, or a more responsible way, let me say it that way, and that gives us a single number. So the idea here was, sure, we look at the deeper search metrics. I mean, I literally scroll through miles of search terms. But our leaders aren't trying to do that. They just want to know: is this working? How is it working? And how is it working over time? So that was really the purpose of this metric. Okay. With that, I'm going to introduce my friend Wendy again. I think Wendy has a quick demo. Wendy, I'll continue to share. I'll just flip the slides, just let me know. But with that, I gotta give credit to Wendy.
We based this on mean reciprocal rank, or MRR, which some of you might be familiar with, but it's a kind of expanded model. So, Wendy, if you don't mind, take it away.
Thanks, Colin. So, yeah, the problem we're facing is that, first, we have a lot of metrics to evaluate search performance, but which one should we most rely on and keep reporting? And second, those traditional methods don't really work with a really powerful search engine like Coveo, because people might not need to make any clicks at all. So with that, I'll walk you through an example, if I can go to the next page. Yeah. This is actually in the middle. Let's wait a couple of seconds until it starts from the very beginning. So what I'm trying to demonstrate is a single search session. For example, right now, I'm looking for our CEO's email, so I search for his name. I find the email right there. I do not need to click anything before I move on. I then search for the next topic, withdraw from ESPP. Similarly, right there with a smart snippet, I do not need to make any clicks at all, and I'm able to get the information. So after I absorb all this information, I move on to a third search, which is PingID, and it is only here that I need to click through to an article. And if we can go to the next slide, we can see that a traditional method looks at each of these queries, Sasan, withdraw from ESPP, and PingID, as individual events. It's not really taking into consideration the relationship between them, and that is why it requires a click or non-click, and the location of the click, to tell us how good the search engine is. But as they say, no man is an island. Right? So in a way, no search query is an island either. By looking at the relationship between these consecutive queries, between Sasan and withdraw from ESPP, and between withdraw from ESPP and PingID, we can really see how the intentions of the user vary from query to query. Because Sasan is a person, and withdraw from ESPP is more of a process.
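For reference, the classic mean reciprocal rank that the talk names as its starting point averages 1/rank of the first clicked result over all queries, so a query with no click contributes zero. The sketch below computes it for the three-query session from the demo, then adds a toy session-aware variant. That variant is purely a hypothetical stand-in: the talk says a machine learning model judges whether intent changed between consecutive queries, whereas this sketch uses simple word overlap, and the click rank of 1 for the last query is an assumption.

```python
def mean_reciprocal_rank(click_ranks):
    """Classic MRR: average of 1/rank; a query with no click scores 0."""
    scores = [1.0 / rank if rank else 0.0 for rank in click_ranks]
    return sum(scores) / len(scores)


def looks_like_reformulation(query, next_query):
    """Toy intent check (assumption): shared words suggest a retry."""
    return bool(set(query.lower().split()) & set(next_query.lower().split()))


def session_aware_score(session):
    """Credit a no-click query when the user moves on to a new topic."""
    scores = []
    for i, (query, click_rank) in enumerate(session):
        has_next = i + 1 < len(session)
        if click_rank:
            scores.append(1.0 / click_rank)
        elif has_next and not looks_like_reformulation(query, session[i + 1][0]):
            scores.append(1.0)  # answered on the results page, no click needed
        else:
            scores.append(0.0)  # reformulated or abandoned
    return sum(scores) / len(scores)


# The session from the demo: (query, rank of clicked result or None).
session = [("sasan", None), ("withdraw from espp", None), ("ping id", 1)]

classic = mean_reciprocal_rank([rank for _query, rank in session])
session_score = session_aware_score(session)
print(round(classic, 3), session_score)
```

Under the classic metric the two answered-in-place queries drag the session down to one third, while the session-aware variant treats the topic change as evidence that the user found what they needed.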
They represent vastly different user intentions. Even though the first search, for Sasan, did not give us any click, we can safely say that the user found the information. So it is by looking at this relationship between consecutive queries that we are able to tell whether the search engine has been able to provide the user with the information they need. And this is something that a machine learning model is able to solve pretty well in the natural language processing space. So since our search is already powered by AI, in this approach our metric evaluation system is AI based as well. And that's the new metric that we have been using to calculate our success. So with that, I think that's the end of our slides. And, Colin, unless you have anything to add, I think we're open for Q&A.
Nope. That's right. I think that was the end of our bit. So, any questions? And I haven't been looking at chat, I'll be honest.
Yeah. Great job. Thank you both for sharing. There are a few questions. I figured we'd just go down one by one, if you are all okay with that. Brian, I see you came off video. Should we start with yours? Brian, you're on mute.
Yes, please. No, I appreciate it. Thank you guys for that demonstration and that conversation. That was very enlightening. You talked a little bit about how you leverage KCS principles and have content segmented out into four different knowledge bases, or arenas, if you will. We actually have, like, seventy different silos of knowledge, but we do have a sharing model as well. Right? We share with the enterprise for the most part. One of the challenges we're finding, though, with about forty thousand users, is how do we really segment that user population to allow those machine learning models to really prove themselves. So I'm looking for any practices you guys might have there, as far as how you may distinguish one employee from another.
We use user attributes.
We pass a little payload of user context along with every search. And so then we use user attributes: things like job function, certainly region, worker type. But then the content has to be labeled in a way that allows you to do that. Right? But then you can marry that with some result ranking rules. Did you say seven or seventy? We have seventy. Oh, seventy. So seven zero. Yep. A lot. That's a challenge, my friend. Yeah. We could talk about that. That's a wild challenge. I would say, normalize the information first. Right? So that, to the index, it looks like more consistent data. And then use query context. So as they're doing their search, if you're able to pass some information about that user, then you can scale that through result ranking. Sure. Yeah. That makes sense. Thank you. We obviously have role access to each of those, quote, unquote, domains that we use. Right? There are seventy different ones out there. So that's one parameter we do pass. The challenge we have with that context of the user is that maybe our HR systems are not on the same wavelength as our knowledge base. So a lot of it's not really one to one, per se. Right? And people that might have access to multiple of these domains might have the same contextual elements in their profile. Yep. So, yeah. That's a tough challenge, man. I mean, we definitely experienced this as well. Having that common thread of metadata throughout your tools and the workplace data, that's what gets it. Right? You gotta do that first, because then it becomes a bit easier to surface in a way that's meaningful. Yeah. Right. Good deal. Thank you, man.
Rosanna, can we pull you into this one? I think you had a question.
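The pattern discussed in this exchange, passing user attributes with each search and pairing them with light result ranking rules over labeled content, can be sketched as follows. This is a generic illustration and not Coveo's API. The `rerank` function, the metadata field names, and the scores are all hypothetical, and the boost is kept deliberately small in the spirit of the earlier advice not to overboost.

```python
def rerank(results, user_context, boost=0.1):
    """Add a small bonus for every metadata field that matches the user."""

    def score(result):
        metadata = result.get("metadata", {})
        matches = sum(
            1 for key, value in user_context.items() if metadata.get(key) == value
        )
        return result["base_score"] + boost * matches

    return sorted(results, key=score, reverse=True)


# Hypothetical context payload sent along with a search.
user_context = {
    "region": "US",
    "worker_type": "employee",
    "job_function": "engineering",
}

# Hypothetical results; `base_score` stands in for the engine's relevance.
results = [
    {"title": "Annual enrollment (UK)", "base_score": 0.80, "metadata": {"region": "UK"}},
    {"title": "Annual enrollment (US)", "base_score": 0.78, "metadata": {"region": "US"}},
    {"title": "Benefits overview", "base_score": 0.60, "metadata": {}},
]

ranked = rerank(results, user_context)
print([r["title"] for r in ranked])
```

The sketch only works when content carries labels that line up with the user attributes, which is the common thread of metadata the speakers describe as the hard prerequisite.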
Well, just on this conversation that happened right now, Colin: do you all have an enterprise taxonomy to stitch the metadata together between these, and some sort of platform for that? Because I know that some organizations have looked at that. Sure. But I wonder how that works with Coveo too. I'll answer that. Yeah. Jordan. Actually, one of the reasons we have several different knowledge bases is that not all teams are resourced in the same way. So we have an HR taxonomy. We do have that. We don't have an all-the-things taxonomy, and that's, you know. Yeah. So it becomes less useful there. We do have an HR taxonomy. I think tools-wise, we probably use PoolParty, I think. Oh, okay. Yep. You know, taxonomy to me is actually more helpful for analyzing data than it is for surfacing things in search. People don't use the words you're using in your taxonomy. You know? Like, maybe. Right. But not always. And so to me, taxonomy is more of a thread for analytics, for what it's worth, than it is a search aid. Yeah. It can kind of create the knowledge graph, or relationships between terms, for you ahead of time, but Coveo does a lot of that already in the machine learning. So, okay. So the question I had in the chat: I love everything that you're all doing, and we want to do that too. And what we find is that we have a challenge with resourcing on our end. So my question is, how are your teams structured, especially around content governance, to have that in place? And is the content governance over your knowledge bases part of the same organization that's managing enterprise search and your product managers, or is that separate? Okay. No. They are separate. And so, definitely, we get the most skin in the game from tech and HR. So tech help, you know, replace my monitor, these kinds of things, and HR help, obviously.
We get the most skin in the game there. Legal is really policies. But they're not massaging content and looking for optimization. They're writing policies. And so we just index those as what they are. So they don't really have an engaged governance team. They are moving over. So not all the teams are created equal. I will say HR, on our side, has a really good governance process. They're looking at the search data. They're looking at case data coming in, contacts and article views coming into the organization. And then they're making decisions about how to respond: maybe it's a synonym here, or a thesaurus rule here, or a content optimization here. And they're doing that weekly, in fact. Is there a central KCS program manager for all these teams, or is it managed on each team? Okay. Yep. Each team is a little bit of a silo, I hate to say it. Yeah. I think that they've all come to understand and center around KCS. But to your point exactly, they're not all resourced the same. And so I can't have five people doing content. You know? I've got one. So what do we do? Exactly.
Okay. So, Timon, do you want to join the conversation? And hi. I don't think we've met personally, but thanks for joining.
No. Thank you. I'm excited to be here. And, Colin, you and I have connected before. Yeah. Yeah. We were in the same boat, with Coveo and LumApps, so we did connect a little bit before. And hello to everyone here. I'm the product owner for enterprise search at Workday. Currently we are focusing on the intranet. We are in a situation where we have our company intranet, and we have a unified search there. But we have very limited sources, only the enterprise sources, available there.
And then there is a standalone enterprise search tool on its own, which has everything else, like, you know, Jira, Confluence. So the reason we have it is because it's the company intranet. We are very careful what goes in there, and content needs to be governed. So any addition of new sources on the intranet needs to be governed before they come in. Right? But then now there is also the challenge. There is a standalone tool which has anything and everything. Right? So that is the power of search. But at the same time, it does have some security and privacy concerns, that there is so much information. Like, it goes all the way back ten years, and you have content over there. So there is a plan right now that, you know, we need to have a go-forward strategy for the standalone tool. So content governance is definitely top of mind for us. You know, our high-level metrics, based on these orgs, which are the orgs, what sources are they using, that is part of our discovery. Right? So, let's say Barca team uses these sources. Can we have those governed and bring them to the company intranet? And, you know, so we are gonna focus on each of the high-level orgs, what sources they are using, and govern them and bring them back. But then, you know, my question to you, Colin, would be, is everything done in the back end for you guys? Like, maybe I posed a different question on this in the chat. I'll come to that later. But what challenges do you see for content governance there? Well, yeah. So a few things. So I wouldn't be too scared about, I mean, we have twenty-three data sources in our intranet. And we don't actually have too many or even result ranking rules. Like, we don't have to manage our query pipeline all that much. We just know where, like, the good data is. Like, the good, like, I don't know. Yeah.
Like, where the real information is. Like, our intranet and our knowledge articles, those get a little bit boosted because those are facts. But then there's all this other goodness that's out there that people are finding every day. And so I wouldn't be too scared of, like, having kind of one large index. But then, yeah, content governance, obviously, there. You have to know your resources. Like, know, excuse me, your connectors. Like, what are you tapping into? You don't wanna tap into garbage because that's just gonna bring back garbage. Mhmm. But I also wouldn't be afraid of some informal content. We have a lot of wiki. Even LumApps for us is more of a community and sort of team-site stuff. But there's so much goodness in there that I, you know, didn't even know about, but you can surface it in search. And so I wouldn't be too afraid of letting Coveo work itself. You know? It really has done a good job of surfacing the primary resources while also, you know, shedding light on some of the sort of other content that's out there. Let's put it that way. Okay. Makes sense. I mean, now for us, we are super careful about, like, what goes on the intranet. So that leads our journey for personalization. Right? You know, based on my high-level org, I use these sources, and make that available on the intranet. Right? So the intranet is not just for consuming information, news, content, and going away from there, but it's also for our workmates to get their jobs done. Right? I mean, they need to start their day on the intranet. And maybe that is where we are leaning towards exploring options for personalization, like, you know, or give the user some config options there, like what they wanna pick, what they wanna see. So that is where we are going with it. I had the other question on the metrics. Do you also look at content gaps? Content gaps. Content gaps, and mhmm.
Unfortunately, we don't, and here's why. I would really love for this to work for us. But in our interface, we treat people search and content search a little bit differently. So when somebody conducts a search on our intranet, it's actually sending two searches. One to content, one to people. And for that reason, both gap results are wrong. You know? Gap reports are wrong. And so I work around that a few different ways, but I'm not able to use the gap report because, technically, we have two searches that are being performed at the same time. Okay. Okay. Makes sense. We do use content gaps a lot. That is other than the yes. That you mentioned. Right? We do benchmark as well. Each of those, like, click-through, click rank, and content gaps. The adoption, that is, like, how the volume is, we don't benchmark that, because the intent is not to use it heavily, but when you need it, it's there. Right? You find the car. So it's just an adoption indicator. But the others, we do benchmark those. We looked at the industry standard, and Coveo helped us with that, you know, recommended some of the industry standards. But we were pretty new to Coveo and, you know, based on where we were at, our current state, we decided, okay, let's look at our history and, you know, this is what it looks like, and this is how we'll benchmark Barca, and we report that out on a quarterly basis as well. I'd love to hear how others do it and what their thoughts are on that. Yeah. Same. Rosanna or Brian, how are you guys measuring or articulating performance of search? Yeah. That's a good question. Colin, we're a lot like you. Our analytics, if you went and looked at our numbers, our content gaps, our click-through rates are through the roof. They're amazing. They're, you know, eighty percent click-through rates.
They're, you know, two percent content gaps. But that's not the story, and we know that. And if I listen to my users, my customers, they face challenges. A lot of it is a change management practice. Right? Getting people more comfortable with search, breaking old search habits. In our previous knowledge base, people would search by the article title itself, or the article number, for example. Right? They just had a quick shortcut to get to it. Yeah. We're trying to develop that practice of searching. But it's hard to tell that story sometimes when the numbers tell one thing, and what my leadership team is hearing tells a different story. So how do I quantify that and qualify that? Yeah. So I'm definitely open to any insight on how to tell that story, because I struggle with that one as well. I didn't introduce myself. I'm Rosanna. I'm from Adobe, and I run a team called Insight and Discovery Experiences. So my team does enterprise search. It does knowledge management. It does insights as well. So we manage the Medallia and Qualtrics implementation too for Adobe, and that's kind of newer in my group. So I'm still learning about, like, what kind of metrics you can get from employee listening. For search, and I guess for all of our platforms, we not only look at the performance, but the outcome of, like, what we have there. So are people actually saving time, or is the effort lower than it was before? So, Colin, when you said, you know, we're not using NPS, good job on that. Satisfaction is definitely much better for a workplace environment. You might also think about asking in those surveys, like, how hard was it for you to find what you needed, and then you get an effort score too. Because I think that's what we're trying to get down to when people say, I want this to be like a Google search. They want it to be easy, basically. And that effort then kinda correlates to the time that they're spending too.
We kind of look at it, like, holistically. If the search is performing well, then people are finding what they need in the end. And we know from, like, UCS research that if people are able to find what they need, when they get to the content, they appreciate the content more as well. So, like, the sentiment on the content too will go down if they're having a hard time finding it, even if it's the same thing. So we look at the sentiment on the content itself and the usage of that content too over time to kind of give us an indirect indication of whether search is working well, and then looking at, like, the referrals that are coming over to that content. Are they going directly, or are they going through search? And I can tell you, like, we use ServiceNow also. Before we had Coveo on ServiceNow, the, like, accessing the content was half of what it is today, like, putting Coveo on there. So we know that that helps, and over time, it's going up. So I would look at some of that stuff too, just to get, like, a whole picture. You're right, Brian. It's like, you've got some data, but you need to have people's stories to kinda validate that too. Yep. Yep. I see there was started on data. I do have a question, actually, about data. So, Wendy, you were talking about the metric that you created for search. I'm curious to see what that looks like. You know, like, is it a number, or what does that look like, and how do you report that out? It's a numeric number, because it involves processing by a machine learning model. We set up a pipeline to calculate it on a schedule and report that. We can report it as a trend or whatever we want. And because it's based on MRR, which is a traditional method, the good thing about it is that if, for example, users are consistently clicking the third item, it's not a bad search performance. Right? And the MRR will only be thirty. To get to one hundred, we have to be consistently clicking the top items.
So using this metric enables us to have more drive in terms of, you know, to keep improving our performance, because even though people are clicking the second item, which is not too bad, we're only getting fifty, and we are aiming for higher and higher. So that's why we deliberately chose this, using MRR as the base calculation. Okay. I'm not familiar with that base calculation. So, mean reciprocal rank. Okay. So this is where you sort of calculate the position of the click. Okay. First position gets the highest score, second position gets a lower score, third position, fourth position gets even lower and lower and lower. In fact, it's exponentially lower. And so it incentivizes those top clicks. And for a long time, when it was, like, search and links, you know, keyword search and links, that worked really well. But as we start to, you know, introduce the answer on the page, it just falls apart immediately. You know? And so we're starting to account for some of that in this developed metric. But mean reciprocal rank is about the position of the click. A perfect score is a one or one hundred in height. It's usually a fraction of one. Awesome. Thank you for explaining that. How is it different from the click rank? Well, click rank only calculates if you click. Right? Click rank doesn't calculate anything if there's no click. MRR counts all the zeros, you know, counts all the zero clicks, which kinda has the opposite effect, which is you're discrediting a valuable search in that situation. So we have to kind of figure out the balance between those. And I think that's a brilliant solution for AI. Right? Suggestions, recommendations, ChatGPT-like answers on the screen. You don't have to click. So how do you measure that? Yep. Yep. Yeah.
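To make the contrast described above concrete, here is a minimal sketch of plain mean reciprocal rank next to average click rank. It is not the speakers' actual metric, which they say also involves a machine learning model. The function names, the 0 to 100 scaling (chosen to match the "fifty" and "one hundred" figures quoted), and the use of `None` for a search with no click are all assumptions made for illustration.

```python
from typing import Optional, Sequence


def mean_reciprocal_rank(click_ranks: Sequence[Optional[int]]) -> float:
    """Mean reciprocal rank on a 0-100 scale (scaling is an assumption).

    Each entry is the 1-based position of the clicked result for one search,
    or None when the search had no click. A search with no click contributes
    0, so it pulls the score down.
    """
    if not click_ranks:
        return 0.0
    total = 0.0
    for rank in click_ranks:
        if rank is None:
            continue  # zero-click search: adds nothing to the sum
        if rank < 1:
            raise ValueError("click ranks are 1-based")
        total += 1.0 / rank
    # Divide by ALL searches, including the ones with no click.
    return 100.0 * total / len(click_ranks)


def average_click_rank(click_ranks: Sequence[Optional[int]]) -> Optional[float]:
    """Average position of the clicked result; searches with no click are ignored."""
    clicked = [rank for rank in click_ranks if rank is not None]
    if not clicked:
        return None  # nothing to average when nobody clicked
    return sum(clicked) / len(clicked)


if __name__ == "__main__":
    always_third = [3, 3, 3]
    always_second = [2, 2]
    half_no_click = [1, 1, None, None]

    print(round(mean_reciprocal_rank(always_third), 1))
    print(mean_reciprocal_rank(always_second))
    print(mean_reciprocal_rank(half_no_click), average_click_rank(half_no_click))
```

Under these assumptions, always clicking the second result scores 50 and always clicking the third scores about 33, since the score falls off as 1/rank. The last case shows the trade-off raised in the conversation: with half the searches ending without a click, average click rank still reads a perfect 1.0 while this version of MRR drops to 50, even if those searches were answered on the page.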
I mean, our metrics are going straight out the window when that happens, because then you have to infer, did they get mad and walk away, or did they submit a case, you know, or did they not submit a case? Were they happy about it? Like, that's gonna be really hard for us to measure. We're in the process of implementing smart snippets, and I've been asked multiple times, how do you report on the usage of smart snippets? And I've never been able to answer that question. So it sounds like it's not a known answer at this point, really. I know your eyeballs. It's about the decrease. I mean, really what Rosanna said, it's like, you're paying effort and then decrease in, like, case creation around those solutions. So you're looking for the, you know, the things around that snippet or that tool or that piece of knowledge to indicate whether it's working. And there are actually some metrics out of the box from Coveo regarding interaction with smart snippets. You can create a dashboard on that. Yeah. Yeah. That's fine. I do want to add on top of that the difference between average click rank and MRR. Average click rank, we think, is not as good as that. If it's improving from one point three to one point two, how good is it really? If we transition that to MRR, it will be something from fifty to sixty. So MRR is more biased to evaluate when the search engine is overly performing really well, and we want to get to the nineties, to the one hundred. But for the average click rank, since everything is evenly distributed, you cannot really have that drive or more precise insight regarding how much your search engine has improved. Well, yeah, well said. Thanks for that. Yeah. Thank you. We're close to time. So I did want to really thank everyone for participating, asking your questions, and we'll gauge whether you guys wanna do something in the future.
Maybe you guys can come and present something to the rest of us to spark some conversation. But before we go, Colin, I do wanna ask, did you say you're rebuilding your own intranet using headless and composable? Did I hear that right? Can you answer in thirty seconds why you guys are doing that versus, like, using. We've evaluated a bunch of out-of-box tools. Sorry, Rosanna. Even Adobe Experience Manager, and it just didn't make the grade. We have this sort of highbrow taste for personalization and design. Right? And so, really, it boils down to our ability to affect the experience. And with packaged solutions, things like LumApps are simpler. I mean, you know, you get what you get. Right? They're good. You get what you get. It's not for us. Bigger solutions like Liferay and Adobe Experience, they're just, like, it's too much. Like, we already sort of have built this stuff for our own web experience. Admittedly, it's for the external web experience, which is much different than an intranet. So, you know, we're working it out. But all in all, we think that's the best decision for Intuit, because we're building on these internally grown tools. Yeah. Yep. So it's gonna be a. Headless composable is the future. We hear it everywhere. So, yeah. Awesome. Well, thank you all for the time. We'll get you the recording. You have my email. If you have any questions, please do connect, and we'll plan for one of these in six to eight weeks. Yep. For the survey, you guys go through it as well. Happy to answer any questions you've got. Thank you, Melissa. Awesome. This was great. Thank you. Thank you so much. Thank you. Take care. Bye.
