Gene Roddenberry long ago set our expectations for natural language processing (NLP). With this gold standard in mind, how can you best add NLP capabilities into the enterprise?

The NLP market is rapidly growing; according to Statista, it’s expected to reach over $43 billion by 2025. This is a reflection of the value NLP can deliver. With the industry experiencing such large growth, professionals skilled in natural language processing are in high demand. Our own research found that 59% of enterprises struggle to find AI talent.

Are you an enterprise architect mulling over the decision, ‘do we build it, buy it off-the-shelf or rely on a SaaS provider?’ Here’s what to consider when trying to answer this question.

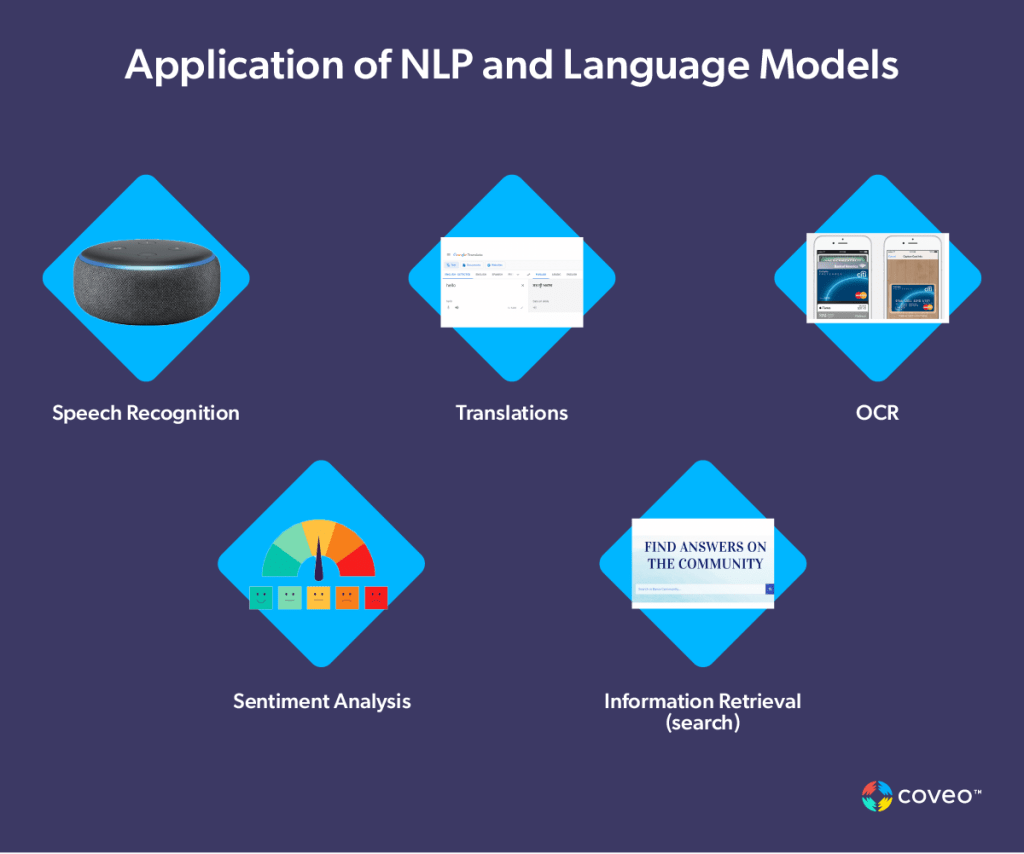

What are NLP’s Capabilities for the Enterprise?

An evolving area within the tech stack, NLP has a growing importance to enterprises. When handled proficiently it can improve all business processes that depend on human language.

NLP capabilities provide an easy “natural language” interface for humans to find knowledge, products, and services stored in digital systems. NLP includes both the input (query type) as well as the computer’s “understanding” of the question. Good NLP spans from finding what is explicitly stated to what is implicitly meant. It is the breadth and depth of deduction that separates good NLP from great.

There are several fundamental disciplines that must work together to ensure proficiency:

- Inputs/Interface

- Understanding and processing

- Inferencing and reasoning

- Outputs and applications

Let’s look at what you should expect to find in each area.

The NLP Stack

Tier 1: Natural Language Inputs

At the top of the stack is the input — be it spoken or direct text, the machine needs to understand what the human is asking. And of course, if spoken, the machine needs to understand the myriad variables in the human voice.

Fortunately, speech-to-text is a relatively solved problem, the beneficiary of 75 years of research. Some issues remain (not all humans speak alike), but performance continues to improve and the costs of these technologies are in the rounding-error range.

Tier 2: Natural Language Understanding

Next in the stack is natural language understanding. This is where the computer must parse the text — sometimes with the help of syntax and tagging (semi-structured content).

The more verbose the tags (XML vs JSON), the easier it is to parse the text and pull out entities and categories. Again, this area is fairly mature, with machines now diagramming sentences surprisingly well. Maybe even better than most 6th graders!
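To make the idea of parsing semi-structured content concrete, here is a minimal sketch that pulls the same entities and category out of an XML record and a JSON record using only the Python standard library. The record, its field names, and the helper functions are hypothetical and exist only for illustration.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical benefit-policy record expressed two ways (illustrative data only).
XML_DOC = """<policy id="P-100">
  <title>Spousal health benefit</title>
  <category>benefits</category>
  <entity type="person_role">spouse</entity>
  <entity type="plan">health</entity>
</policy>"""

JSON_DOC = """{
  "id": "P-100",
  "title": "Spousal health benefit",
  "category": "benefits",
  "entities": [
    {"type": "person_role", "text": "spouse"},
    {"type": "plan", "text": "health"}
  ]
}"""


def entities_from_xml(doc: str) -> dict:
    """Read the category and tagged entities from the XML form."""
    root = ET.fromstring(doc)
    return {
        "category": root.findtext("category"),
        "entities": [(e.get("type"), e.text) for e in root.findall("entity")],
    }


def entities_from_json(doc: str) -> dict:
    """Read the category and tagged entities from the JSON form."""
    data = json.loads(doc)
    return {
        "category": data["category"],
        "entities": [(e["type"], e["text"]) for e in data["entities"]],
    }


xml_result = entities_from_xml(XML_DOC)
json_result = entities_from_json(JSON_DOC)
print(xml_result == json_result)  # both forms yield the same entities
```

Either way, the tags do the heavy lifting: the parser does not have to guess which words are entities because the content already says so.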

Tier 3: Inference and Reasoning

This is the area where the greatest gains still need to be made. The challenge is the amount of computing power necessary, as well as the neural networks needed for deep learning.

Here the challenges become quite stark. It is far easier to create models that require less human intervention than it is to create a computer that thinks like a human. For example, we can create models that deduce that, when a person writes “offspring,” she also means “children.” This eliminates the very human and arduous task of manually building a thesaurus or dictionary.

The precision depends on the types of filtering you are doing, the models used, how much structure is in your content, and whether or not the models were supervised. Ten thousand queries a month on whether or not a [spouse] [wife] [husband] [partner] is eligible for a benefit will train that model very quickly.
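As a rough illustration of how query traffic can stand in for a hand-built thesaurus, the sketch below groups terms that repeatedly lead users to the same document and then uses those groups to expand a query. This is a deliberately simple co-click heuristic, not a description of any particular product's model; the click log, the document IDs, the `min_clicks` threshold, and both function names are assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical click log: (query term, ID of the document the user opened).
# Real logs would be far larger; the repeat counts here are made up.
CLICK_LOG = (
    [("spouse", "benefits-eligibility")] * 4
    + [("wife", "benefits-eligibility")] * 3
    + [("husband", "benefits-eligibility")] * 3
    + [("partner", "benefits-eligibility")] * 2
    + [("partner", "reseller-program")] * 1
    + [("laptop", "hardware-catalog")] * 5
)


def learn_related_terms(click_log, min_clicks=2):
    """Relate terms that each led to the same document at least min_clicks times."""
    clicks = defaultdict(lambda: defaultdict(int))
    for term, doc_id in click_log:
        clicks[doc_id][term] += 1

    related = defaultdict(set)
    for term_counts in clicks.values():
        strong = {t for t, n in term_counts.items() if n >= min_clicks}
        for term in strong:
            related[term] |= strong - {term}
    return dict(related)


def expand_query(term, related):
    """Return the term plus any learned related terms, sorted for stable output."""
    return sorted({term} | related.get(term, set()))


related = learn_related_terms(CLICK_LOG)
print(expand_query("wife", related))    # ['husband', 'partner', 'spouse', 'wife']
print(expand_query("laptop", related))  # ['laptop']
```

The more traffic a topic receives, the faster these groupings stabilize, which is why a high-volume question trains quickly while rare questions stay sparse.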

On the other hand, if I want the computer to determine the best policy based on my marital status, that requires a different level of inference. This is when deep learning comes into play.

Off-the-shelf AI offers a tremendous leg up. In terms of time, talent, and cost, it’s a huge savings over investing in R&D to create models and then having search admins put them into operation.

Tier 4: Deep Learning

Deep learning requires sophisticated algorithms and tremendous computing power. Data is passed through a network of neural nodes that attempts to learn as much as possible from it. Here’s a great explainer on how neural networks work.

The bottom line is that while neural networks offer a powerful way of capturing learning, they are highly dependent on people for structuring them, providing effective training data, and defining effective backpropagation algorithms.

One of the challenges of deep learning is that it isn’t always clear how a computer reached a given conclusion. Like humans, neural networks can take pathways and bypass certain pieces of information, resulting in undesirable conclusions.

A great example is a piece of AI used for sentencing prisoners. The model determined that people who are poor or don’t own their own home are more likely to commit a crime than those who do, and would thus be given harsher sentences. But correlation is not causation. Testing for such biases is necessary when developing applications in order to stem distrust.
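One simple way to test for this kind of bias is to compare how often a model flags people in different groups. The sketch below computes the flag rate per group and the gap between the highest and lowest rates (often called the demographic parity difference). It is one of several possible fairness checks, and the records, field names, and function names are hypothetical.

```python
from collections import Counter

# Hypothetical model outputs: whether each person was flagged "high risk",
# alongside an attribute that should not drive the decision.
PREDICTIONS = [
    {"owns_home": True, "high_risk": False},
    {"owns_home": True, "high_risk": False},
    {"owns_home": True, "high_risk": True},
    {"owns_home": True, "high_risk": False},
    {"owns_home": False, "high_risk": True},
    {"owns_home": False, "high_risk": True},
    {"owns_home": False, "high_risk": False},
    {"owns_home": False, "high_risk": True},
]


def flag_rate_by_group(records, group_key, outcome_key):
    """Fraction of records in each group that received the flagged outcome."""
    totals, flagged = Counter(), Counter()
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group flag rates."""
    return max(rates.values()) - min(rates.values())


rates = flag_rate_by_group(PREDICTIONS, "owns_home", "high_risk")
print(rates)              # {True: 0.25, False: 0.75}
print(parity_gap(rates))  # 0.5
```

A large gap does not prove the model is unfair on its own, but it is a signal that the attribute, or something correlated with it, deserves a closer look before the application ships.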

For an even more dramatic example, consider the time when the state-of-the-art GPT-3 AI recommended that a simulated mental health patient commit suicide.

Tier 5: Outputs and Applications

The final part of the stack is determining the output. What are the best applications for your natural language stack?

According to Gartner, applications to date offer a broad set of capabilities that can be:

- A stand-alone, commoditized capability

- Multiple functions that are combined to deliver a targeted solution or platform

- Functionality embedded in existing solutions

Natural Language Search

With all of those considerations, it’s not surprising that NLP in the enterprise has taken root around search functions — and in those areas where finding the right information quickly impacts the top or bottom lines.

This is why natural language search has become a must-have in customer service, both in call centers and in self-service models. It is also becoming increasingly important in ecommerce.

NLP and Knowledge Strategy

Beyond the actual search terms, the context of the user matters. An internal purchaser searching for a laptop and an external customer doing the same, for example, are quite different and bring different assumptions to the table. Incorporating context like the user’s role and geographic location helps to fine-tune the raw NLP techniques.
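A minimal sketch of this idea: start from a text-only relevance score and add a boost when a document matches the user's role or region. The documents, scores, boost weights, and the `rerank` function are all hypothetical; a production system would learn such weights rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Doc:
    title: str
    text_score: float  # relevance from text matching alone
    audience: str      # "internal" or "external"
    region: str        # e.g. "CA", "US", or "any"


# Hypothetical results for the query "laptop" (illustrative scores only).
RESULTS = [
    Doc("Laptop procurement policy", 0.60, "internal", "CA"),
    Doc("Laptop product page", 0.70, "external", "any"),
    Doc("Laptop warranty FAQ", 0.65, "external", "CA"),
]


def rerank(docs, user_role, user_region, role_boost=0.3, region_boost=0.1):
    """Order documents by text score plus boosts for matching user context."""
    def score(doc):
        total = doc.text_score
        if doc.audience == user_role:
            total += role_boost
        if doc.region == user_region:
            total += region_boost
        return total

    return sorted(docs, key=score, reverse=True)


internal_view = [d.title for d in rerank(RESULTS, "internal", "CA")]
external_view = [d.title for d in rerank(RESULTS, "external", "US")]
print(internal_view)
print(external_view)
```

With these made-up numbers, the internal purchaser in CA sees the procurement policy first, while the external customer in the US sees the product page first, even though both typed the same query.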

The ability to bring such information to bear is key to an excellent search experience and goes beyond NLP per se. The ability to enhance NLP with other techniques is essential.

Coveo uses NLP techniques to analyze submitted queries in order to provide an optimal balance for “search-oriented” language processing. As a search technology, we have to take into account that, while some queries can be lengthy, the average query is only 2.5 words long, which is not much for NLP to work with. To go beyond this in providing a great digital experience, Coveo uses ML and other methods.

As Coveo’s Director of R&D Mathieu Fortier says, “Coveo uses many techniques to ensure that the most relevant results are presented to the user based on available context, including NLP/NLQ techniques.” NLP is one important element in an overall approach to effective enterprise knowledge management that does search right.

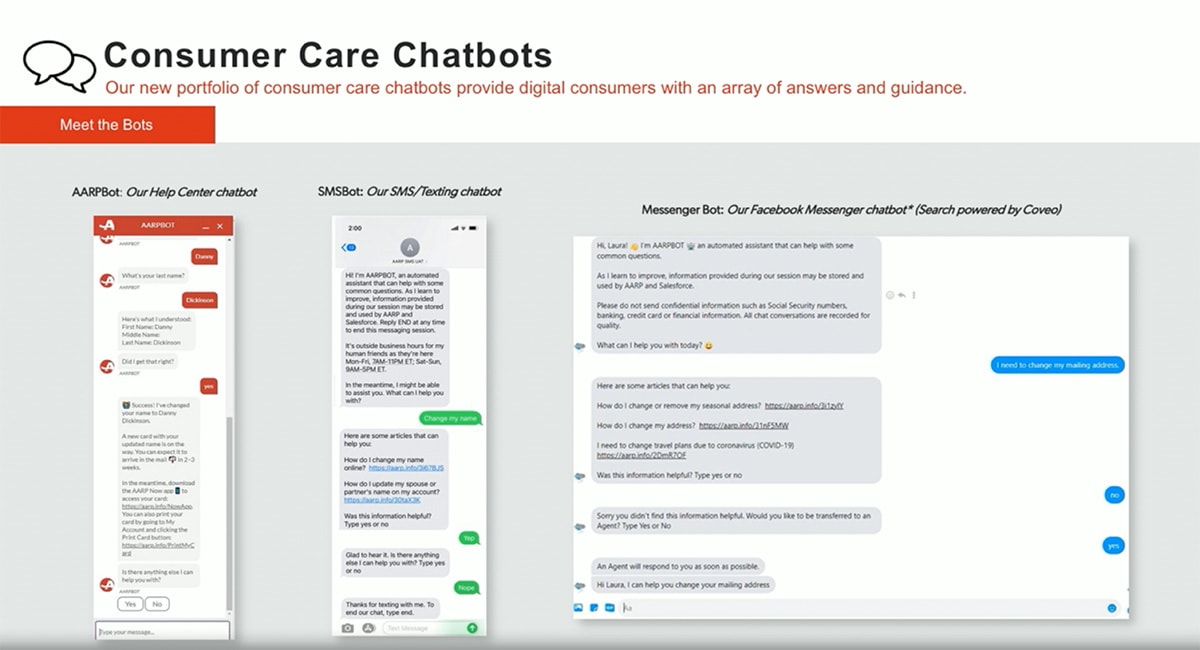

Separating the Hype From the Reality

Much of the hype surrounding NLP comes from chatbots (aka conversational AI). Basically, the promise was that of replacing human service agents with NLP-enabled software for a paradigm-shifting boost in efficiency. The reality for many has been something less wonderful, and users turned out to be readily able to distinguish between real and artificial support, resulting in widespread discontent.

Nevertheless, effectively implemented chatbots are an important tool in the evolving landscape, and any business that ignores them entirely will be at a severe disadvantage. The key is to deploy chatbots in the right ways to improve self-service and time-to-resolution by better identifying what the user needs.

Organizations like AARP have embedded chatbots in various channels on their help sites. The bots use Coveo to leverage the organization’s knowledge and retrieve relevant, high-value content.

Effectively incorporating NLP into the enterprise is a big, nuanced effort. It requires a good understanding of business processes, NLP, and AI/ML, among other techniques. To get a grip on this effort it’s often best to involve an experienced partner.

Modern NLP-enabled solutions should be able to form an accurate, actionable portrait of the organization, its information and its users. This portrait should be dynamic and responsive to changing conditions.

Dig Deeper

Still on the fence about investing in NLP? Join search experts from Adobe, Data Science Central, Perficient, and Coveo to get a better understanding of how NLP impacts, and improves, your users’ search experience.