We’ve discussed how relevance ranking in search is being impacted by machine learning – and continuous learning.


Still, search relevance seems like a tricky topic: what’s relevant to you when it comes to the term ‘cartoon’ may be worlds apart from what’s relevant to me. For example, Futurama has been one of my comfort shows for a long time, and there’s a scene in it that captures the concept of search relevance pretty well.

In the last episode of the first season, Fry is the lucky contestant who finds a golden-ticket bottle cap that wins him a trip to the Slurm bottling factory on Wormulon. It’s there that the whole Planet Express crew gets to party down with Slurms MacKenzie and discovers the horrifying secret ingredient that makes Slurm so gosh-darn addictive.

Before that, though, the eternally clever writers behind the show treat us to some speculation about what that top-secret ingredient might be.

“Maybe it’s people!” Fry gasps, clutching his umpteenth Slurm can of the day close to his chest.

“No, there’s already a soda like that,” Leela responds nonchalantly. “Soylent Cola.”

“Oh,” Fry says, relieved. Then he asks, “What’s it like?”

Leela shrugs, and quips, “It varies from person to person.”

A riff on the 1973 science fiction cult classic Soylent Green, Leela’s joke also neatly summarizes the difficulty behind the concept of ‘search relevance.’ It’s contextual—you know it when you see it.

But, per Peter Drucker’s 1954 management by objectives model, we need a way to show that our search engines have achieved relevance.

Measurement has its upsides and downsides (personally, I’m glad HVAC was invented before management became obsessed with measuring everything, though we could make the office a tad less cold in the summer months). Really, it’s all about goals: it’s hard to chart a path forward without a target to aim for.

The difficulty with relevance is that it’s contextual. So what kind of goal can you set around something so ephemeral?

Well, just like CSAT (customer satisfaction) and ESAT (employee satisfaction) scores, maybe we can establish an RSAT (Relevance Satisfaction Score) that helps bridge the gaps between the long-standing metrics used to evaluate the effectiveness of a search.

What Are the Different Measures or Metrics for Search Engines?

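The two long-standing metrics are precision (the fraction of returned results that are relevant to the submitted query) and recall (the fraction of all relevant results in the index that the search engine actually returns). The two tend to pull against each other: returning more results usually raises recall but lowers precision, and relevance often lives in the balance between them.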
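To make that tension concrete, here’s a minimal Python sketch that computes both metrics for a single query, assuming you already have a set of documents judged relevant (say, by human raters). The document IDs are made up, and the F1 score (the harmonic mean of precision and recall) is added here as one common way to summarize the trade-off; it isn’t part of the framework discussed in this post.

```python
def precision_recall(returned: list[str], relevant: set[str]) -> tuple[float, float]:
    """Precision and recall for one query, given the returned doc IDs
    and the set of doc IDs judged relevant."""
    hits = sum(1 for doc in returned if doc in relevant)
    precision = hits / len(returned) if returned else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


def f1(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical relevance judgments for one query.
relevant = {"d1", "d2", "d3", "d4"}

# A short, cautious result list: high precision, low recall.
narrow = ["d1", "d2"]
# A long, greedy result list: full recall, lower precision.
broad = ["d1", "d2", "d3", "d4", "d5", "d6", "d7", "d8"]

for name, results in [("narrow", narrow), ("broad", broad)]:
    p, r = precision_recall(results, relevant)
    print(f"{name}: precision={p:.2f} recall={r:.2f} f1={f1(p, r):.2f}")
```

Both lists come out with the same F1 of about 0.67, which is a good reminder that a single summary number can hide two very different search experiences.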

After all, search relevance solves real problems. Employees spend 2.5 hours every day just looking for the information they need to do their jobs. This dominoes into longer hours spent completing projects, which contributes to burnout, which leads to turnover … You get the picture.

And what about customer service? Forty-four percent of customers said that being unable to find information, or getting conflicting information, is a negative enough experience to make them abandon a brand. Self-service paths can lift CSAT scores on their own by empowering customers who want to handle their own repairs (Apple’s recent policy changes on self-service repair are a case in point).

Ecommerce seems like a no-brainer: people can’t buy what they can’t find. But did you know that 90% of consumers expect online shopping to be equal to or better than an in-store experience? That’s a high bar.

So, with all of this in mind, search relevance is clearly worth pursuing. But, again, how do you know you’ve reached those dizzying information retrieval heights?

A Framework for Measuring Search Relevance

First things first: there isn’t a single formula you can use to measure the effectiveness of your search engine. That mirrors the problem everyone in the search industry is trying to solve: matching search results to the individual searcher, one to one, rather than to monolithic personas.

Instead, like a gold prospector, you’ll need to sift golden insights out of your users and the content they’re consuming (or want to consume). Many cognitive engines today come with plenty of equations and user analytics metrics for evaluating whether a result truly answers a user’s search query.

Cumulative gain, for example, sums the graded relevance scores of the results in a ranked list (typically the top few), while click-through rank can show you how many clicks a piece of content receives, regardless of where it ranks on a results page. These kinds of metrics can paint a picture of how users interact with your content, but they are bounded by what content is available (i.e., what’s in your index) and by the quality of that content.
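Here’s a minimal sketch of cumulative gain (CG) and its position-aware cousins, discounted cumulative gain (DCG) and normalized DCG (nDCG), assuming each returned result has already been given a graded relevance score (0 = irrelevant, 3 = highly relevant). The grades are illustrative, and the "ideal" ordering is built from the returned results only, which is a common simplification.

```python
import math


def cumulative_gain(grades: list[float], k: int) -> float:
    """CG@k: plain sum of the graded relevance of the top-k results."""
    return sum(grades[:k])


def dcg(grades: list[float], k: int) -> float:
    """DCG@k: each grade is divided by log2(position + 1), with 1-based positions,
    so relevant results near the top count more than those further down."""
    return sum(g / math.log2(i + 2) for i, g in enumerate(grades[:k]))


def ndcg(grades: list[float], k: int) -> float:
    """nDCG@k: DCG divided by the DCG of the best possible ordering of the same grades."""
    ideal = dcg(sorted(grades, reverse=True), k)
    return dcg(grades, k) / ideal if ideal > 0 else 0.0


# Hypothetical grades for the top six results, in ranked order.
grades = [3, 2, 3, 0, 1, 2]
print(cumulative_gain(grades, 6))  # 11
print(round(dcg(grades, 6), 3))    # 6.861
print(round(ndcg(grades, 6), 3))   # 0.961
```

Plain CG ignores order: shuffling the same six results leaves it at 11. DCG and nDCG, by contrast, reward putting the most relevant results first, which is usually closer to what a searcher experiences.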

How to Measure Search Relevance

The most important first step is establishing what you’re trying to measure. Are you trying to understand why a majority of shoppers don’t become return customers? Or why a project document you know exists doesn’t get used more often?

Understanding what relevance means for the audience you’re trying to reach will give you strong indicators of which metrics are valuable for measuring progress toward that goal.

Here’s a potential framework for an RSAT score: start by establishing the types of searches you observe within your digital presence. For our framework, we’ll start with task-oriented searchers (those who are trying to complete an assignment or fix a problem) and delight-oriented searchers (those who are diving down the internet rabbit hole on an information-filled adventure).

From there, we can divine what measurable variables are useful. So, for our examples, the following might apply:

| SEARCH EXPERIENCE | GOAL | METRIC VARIABLE |
|---|---|---|
| Task-oriented | Speed to destination | |
| Delight-oriented | Discovery | |
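The framework doesn’t prescribe a formula, so what follows is purely a hypothetical sketch of how an RSAT score could be assembled: each search session is scored against the goal of its own search experience, and the scores are then averaged. The `Session` record, the metric choices (time to a satisfying click for speed to destination, distinct results opened for discovery), the target values, and the equal weighting are all assumptions you would replace with your own.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Session:
    """Hypothetical per-session record; field names are illustrative only."""
    kind: str                            # "task" or "delight"
    seconds_to_success: Optional[float]  # time to a satisfying click; None if never
    items_explored: int                  # distinct results opened in the session


def task_score(s: Session, target_seconds: float = 30.0) -> float:
    """Speed to destination: 1.0 at or under the target time, shrinking as it takes longer."""
    if s.seconds_to_success is None:
        return 0.0
    if s.seconds_to_success <= target_seconds:
        return 1.0
    return target_seconds / s.seconds_to_success


def delight_score(s: Session, target_items: int = 5) -> float:
    """Discovery: share of a target number of distinct results explored, capped at 1.0."""
    return min(1.0, s.items_explored / target_items)


def rsat(sessions: list[Session]) -> float:
    """Hypothetical RSAT: mean of per-session scores, each judged by its own experience's goal."""
    scorers = {"task": task_score, "delight": delight_score}
    if not sessions:
        return 0.0
    return sum(scorers[s.kind](s) for s in sessions) / len(sessions)


sessions = [
    Session("task", 20.0, 1),     # found it fast -> 1.0
    Session("task", 60.0, 3),     # found it, but slowly -> 0.5
    Session("task", None, 4),     # never found it -> 0.0
    Session("delight", None, 5),  # explored widely -> 1.0
]
print(rsat(sessions))  # 0.625
```

Scoring each session against its own experience’s goal keeps a browsing-heavy delight session from dragging down the score the way it would under a single speed-based metric.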

The right combination depends on your use case and your goals. Dwell time, for example, could be important in a workplace or service context, but it cuts both ways. A low number might mean a user clicked on a result and then bounced back to the results page because they weren’t satisfied with what they found.

Or it could be a good sign that the user found what they were looking for quickly and completed their task, especially if you have a feature like smart snippets implemented in your search engine.
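One way to keep that ambiguity visible, rather than averaging it away, is to read each click’s dwell time together with whether the user went back to the results page. The sketch below assumes you log both per click; the 10-second threshold and the labels are arbitrary assumptions, not an industry standard.

```python
from collections import Counter


def interpret_dwell(dwell_seconds: float, returned_to_results: bool,
                    short_threshold: float = 10.0) -> str:
    """Rough reading of one click; the threshold is an assumption to tune per site."""
    if dwell_seconds >= short_threshold:
        return "engaged"
    if returned_to_results:
        # Short visit, then straight back to the results page: likely a miss.
        return "likely unsatisfied"
    # Short visit with no return: the user may have found the answer quickly
    # and moved on to finish their task.
    return "possibly satisfied"


# Hypothetical click log: (dwell time in seconds, returned to results page?)
clicks = [(4.0, True), (3.0, False), (45.0, False), (6.0, True)]
print(Counter(interpret_dwell(d, r) for d, r in clicks))
```

Splitting short clicks by what happened next turns one ambiguous average into two signals you can actually act on.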

Click rank can likewise be a mercurial metric. It’s less useful in a commerce use case, for example, because shoppers like to browse: they’ll scroll and evaluate a few options (unless, of course, they know exactly what they’re looking for and can enter an exact query or navigate directly to the product they want). Read naively, click rank can have you pulling your hair out over seemingly poor results, when shoppers are simply clicking on items listed lower in the ranking.
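A quick sketch of two click-position metrics shows why context matters. Average click rank here is the mean 1-based position of clicked results, and mean reciprocal rank (MRR), added as a common companion metric, averages 1 / position of the first clicked result per query. Both sets of positions are invented for illustration.

```python
from statistics import mean


def average_click_rank(clicked_positions: list[int]) -> float:
    """Mean 1-based position of clicked results; lower means clicks land near the top."""
    return mean(clicked_positions)


def mean_reciprocal_rank(first_click_positions: list[int]) -> float:
    """MRR over queries: average of 1 / position of each query's first clicked result."""
    return mean(1 / p for p in first_click_positions)


# Hypothetical first-click positions, one per query.
support = [1, 1, 2, 1]   # knowledge-base searches: users click near the top
shopping = [1, 4, 7, 3]  # commerce searches: shoppers browse deeper

print(average_click_rank(support), average_click_rank(shopping))  # 1.25 3.75
print(mean_reciprocal_rank(support))                              # 0.875
print(round(mean_reciprocal_rank(shopping), 3))                   # 0.432
```

The shopping numbers look worse on both metrics, but for browsing shoppers that may reflect healthy exploration rather than poor ranking, so it pays to compare like with like.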

Choosing the right set of metrics is going to depend on your business, your goals, and your users’ goals. Keeping these in mind will guide you to the evaluation methods that work best for what you’re trying to measure.

You can investigate different search experience designs and metrics with A/B testing to see what fits best with your target audience.
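For a simple click-through comparison between two search designs, a two-proportion z-test is one standard way to check whether a difference is likely to be more than noise. This sketch assumes the success metric is the share of searches that end in at least one click, that the test runs to a fixed sample size, and that samples are large enough for the normal approximation; the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(successes_a: int, trials_a: int,
                          successes_b: int, trials_b: int) -> tuple[float, float]:
    """Two-sided z-test on a success rate (e.g., searches with a click)
    for variant A vs. variant B. Returns (z, p_value)."""
    p_a = successes_a / trials_a
    p_b = successes_b / trials_b
    # Pooled rate under the null hypothesis that both variants perform the same.
    pooled = (successes_a + successes_b) / (trials_a + trials_b)
    se = sqrt(pooled * (1 - pooled) * (1 / trials_a + 1 / trials_b))
    if se == 0:
        # Both variants all-success or all-failure: no detectable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: 12% vs. 13.2% of 10,000 searches each ending in a click.
z, p = two_proportion_z_test(1200, 10_000, 1320, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these numbers, z comes out around 2.56 and the two-sided p-value around 0.01. Statistical significance still isn’t the same as relevance, so pair a test like this with the experience-specific metrics above.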

Search Metrics: A Choose Your Own Adventure

Perhaps the very first step, before deciding which metrics to use as guardrails for your search relevance, is understanding where your current search experience lands.

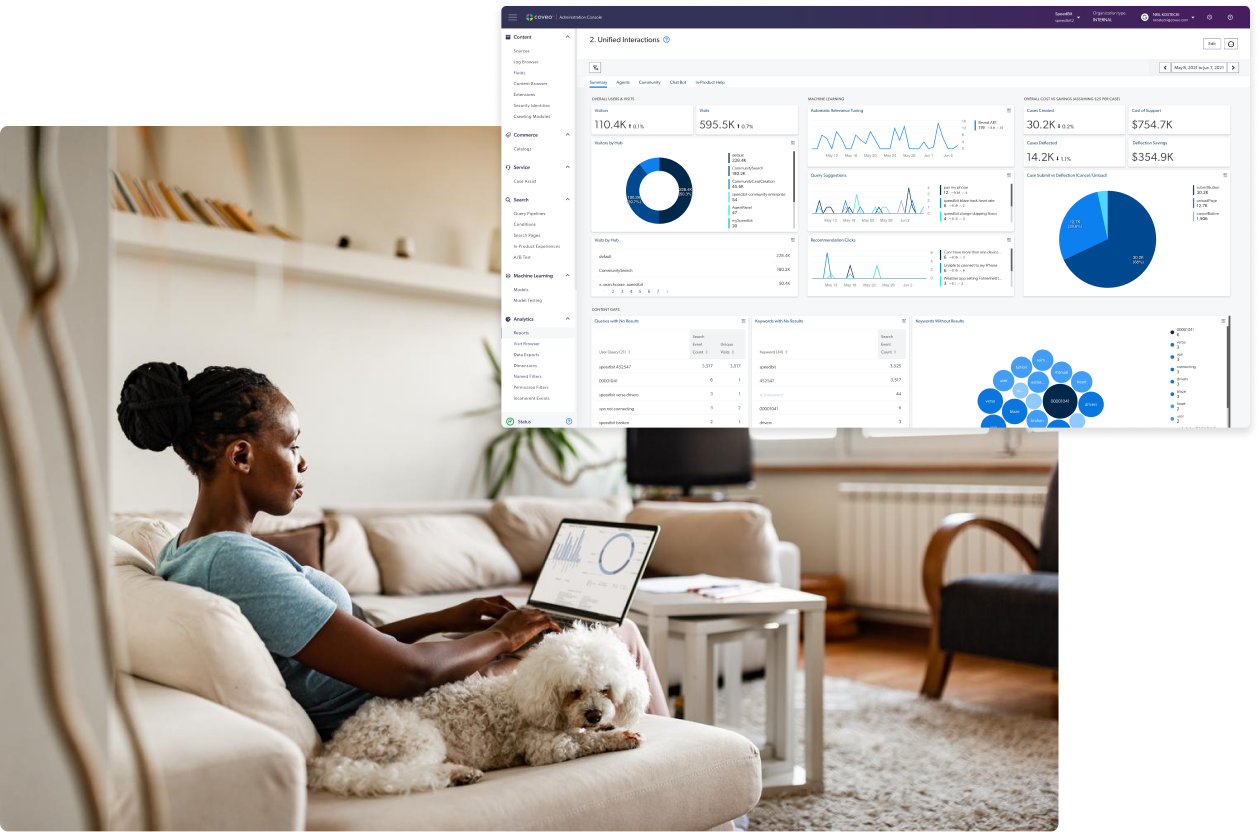

Enter: Coveo’s Relevance Maturity Model. Our white paper goes in depth on what relevance really means in the search industry, how to discern where your organization currently sits within this framework, and what steps are necessary to increase your relevance — and your bottom line.
